Everyone knows how important data is in a digital transformation context. Actually getting control of this "new oil" and governing it properly does not happen automatically. In this article we summarize the top 5 Data Governance mistakes and give you a number of tips on how to avoid them.

1. Data Governance is not business driven

Who is leading your Data Governance effort? If your initiative is driven by IT alone, you dramatically limit your chance of success. Data Governance is a company-wide initiative and needs both business and IT support. It also needs support from the different organizational levels. Your executive level needs to openly express support in different ways (sponsorship, but also communication). However, this shouldn’t be a purely top-down initiative: all other involved levels also need to be on board. Keep in mind that they are the ones who will make your data organization really happen.

2. Data Maturity level of your organization is unknown or too low

Being aware of the need for Data Governance is one thing. Being ready for Data Governance is a different story. In that sense it is crucial to understand the data maturity level of your organization.

There are several models to determine your data maturity level, but one of the most commonly used is the Gartner model. Surveys reveal that 60% of organizations rank themselves in the lowest 3 levels. Referring to this model, your organization should be close to (or beyond) the systematic maturity level. If it is not, make sure to fix this first before taking the next steps in your initiative. You need to have these basics properly in place. Without this minimum level of maturity, it doesn’t really make sense to move on. You don’t build a house without the necessary foundations.

3. A Data Governance Project rather than Program approach

A substantial number of companies tend to start a Data Governance initiative as a traditional project. Think of a well-defined structure, a known effort and duration, clearly defined benefits, and so on. When you think about Data Governance or data in general, you know that’s not the case. Data is dynamic and ever changing, and it has far more touch points. Because of this, a Data Governance initiative doesn’t fit a traditional, narrowly focused project management approach. What does fit is a higher-level program approach in which you define a number of project streams that each focus on one particular area. Some of these streams can have a defined duration (e.g. implementation of a business glossary). Others (e.g. change management) can have a more ongoing character.

4. Big Bang vs Quick Win approach

Even if you have a proper company-wide program in place, you have to make sure that you focus on the right quick wins to inspire buy-in and help build momentum. Your motto should not be Big Bang but rather Big Vision & Quick Wins.

Data Governance requires involvement from all levels of stakeholders. As a result, you need to make clear to everyone what your strategy and roadmap look like.

With this type of program, you need to generate enthusiasm from your very first steps. It is key that you keep this heartbeat in your program, and for that reason you need to deliver quick wins. If you don’t, you strongly risk losing traction. Successfully delivering quick wins helps you gain credit and support for future steps.

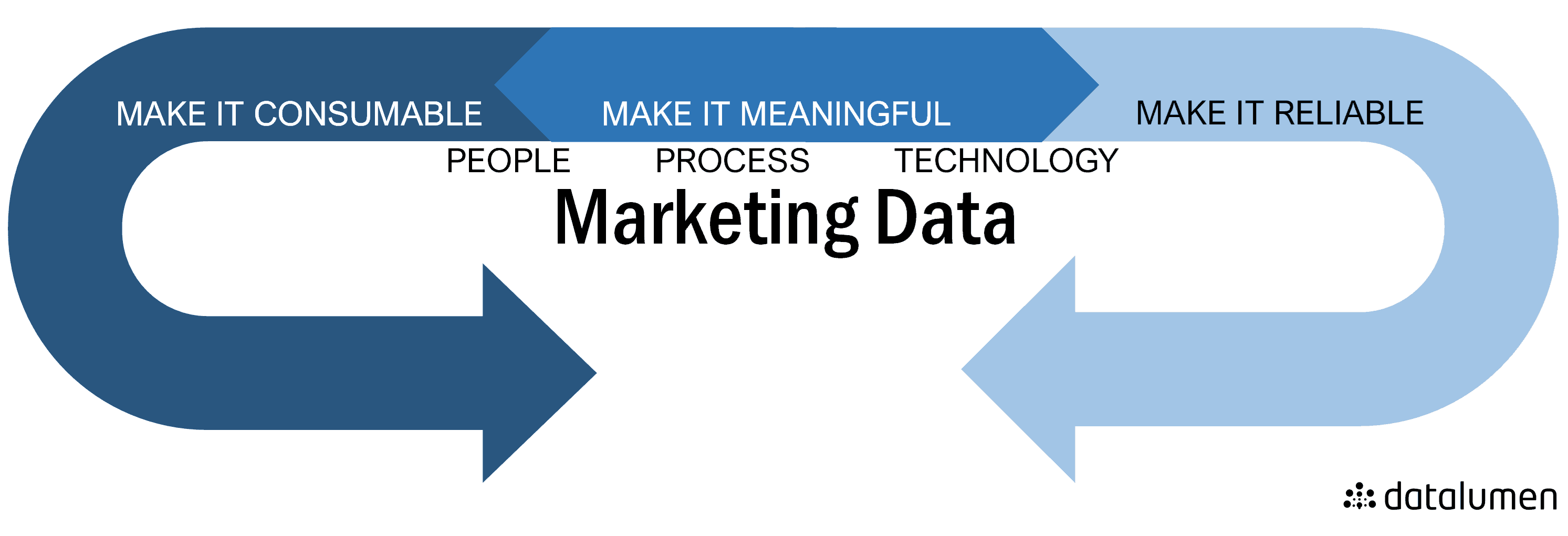

5. No 3P mix approach

Data Governance has important People, Process and Platform dimensions. It’s never just one of these and requires that you pay the necessary attention to all of them.

- When you implement Data Governance, people will almost certainly need to start working in a different way. They may, for example, need to give up exclusive data ownership. These are all elements that require strong change management.

- When you implement Data Governance, you shift your organization from a system-silo point of view to a data process perspective. Ownership of your customer data no longer lies with just the CRM or a Marketing Manager, but with all the key stakeholders involved in customer-related business processes.

- When you want to make Data Governance a success, you need to make it as efficient and easy as possible for every stakeholder. This implies that you should think thoroughly about how you can facilitate them in the best possible way. Typically, this means looking beyond traditional Excel, SharePoint or wiki-type solutions and looking into platforms that support your complete Data Governance community.

Also in need of data governance? Would you like to know how Datalumen can help you get your data agenda on track? Contact us and start our data conversation.