THE DATA SHARING IMPERATIVE: WHY DATA MARKETPLACES ARE YOUR NEXT BIG MOVE

Data & Analytics (D&A) leaders need to demonstrate the tangible business value of their D&A and AI initiatives, including the rapidly evolving field of Generative AI (GenAI). As organizations strive to maximize the potential of their data assets, many are turning to data marketplaces and exchanges. These platforms offer a powerful means to realize both tangible and intangible value from data more quickly while meeting the growing demand for broad data sharing and monetization.

The Data Value Dilemma

D&A leaders are under increasing pressure to show concrete returns on investment in data and AI technologies. However, quantifying the value of data assets and AI outcomes is difficult. Traditional metrics often fail to capture the full spectrum of benefits that data-driven initiatives bring to an organization.

Enter Data Marketplaces – The Storefront for Data Consumers

Data providers often seek to monetize their data assets. Consumers enter data marketplaces looking for data that can benefit their business. For example, a GPS navigation company could act as a data provider, offering traffic-related data such as historical congestion and emissions reports to consumers on public data marketplaces. Data consumers can then use this traffic data to meet their specific business needs, such as helping a retail business optimize traffic planning or gain better insight into its sustainability indicators.

Considering who provides the data, these platforms come in two primary forms:

- Internally managed

Internally managed data marketplaces facilitate data sharing and collaboration within an organization. While primarily set up for internal use, many of these marketplaces can also consume data from external data markets and exchanges to some degree. Today, over 70% of internally managed marketplaces serve only internal consumers. About 30% of internally managed marketplaces are already monetizing their data by commercializing it on the external market. For example, retailers use their internal data marketplaces to sell consumer data to their fast-moving consumer goods (FMCG) suppliers.

- Externally managed

These data marketplaces, also referred to as data exchanges, enable data transactions between different organizations. Examples of data exchanges include the Nielsen Marketing Cloud, Dun & Bradstreet, Precisely, and Experian. These platforms offer a wide range of data types, including demographic and psychographic information, consumer behavior and preferences, purchasing history, and credit information. In addition to these commercial platforms, more public and open data is becoming available. Examples include data.europa.eu, the portal for European data, as well as numerous national and local government, market-specific, and even organizational initiatives such as the Infrabel Open Data Portal, all of which can be integrated into your data initiatives.

Unlocking the Advantages

By leveraging these platforms, businesses can unlock several key advantages:

- Enhanced Data Discovery and Access

Data marketplaces make it easier for users across an organization to find and access relevant data sets. This improved discoverability can lead to faster decision-making processes, reduced duplication of efforts, and increased cross-departmental collaboration.

- Data Monetization Opportunities

External data exchanges open up new revenue streams by allowing organizations to monetize their data assets. This can include selling anonymized customer insights, offering industry-specific datasets, and providing real-time data feeds. The same principle can be applied to internal data sharing, where departments or sister companies agree on an inter-company cost compensation mechanism.

- Improved Data Quality and Governance

To participate in data marketplaces, organizations must adhere to certain quality standards and governance practices. This drive towards better data management can result in enhanced data accuracy and reliability, stronger compliance with data regulations, and increased trust in data-driven decision-making.

- Accelerated Innovation

Access to diverse datasets through marketplaces can fuel innovation, especially in AI and GenAI applications. Benefits include more comprehensive training data for AI models, novel insights from combining internal and external data sources, and faster development of data-driven products and services.

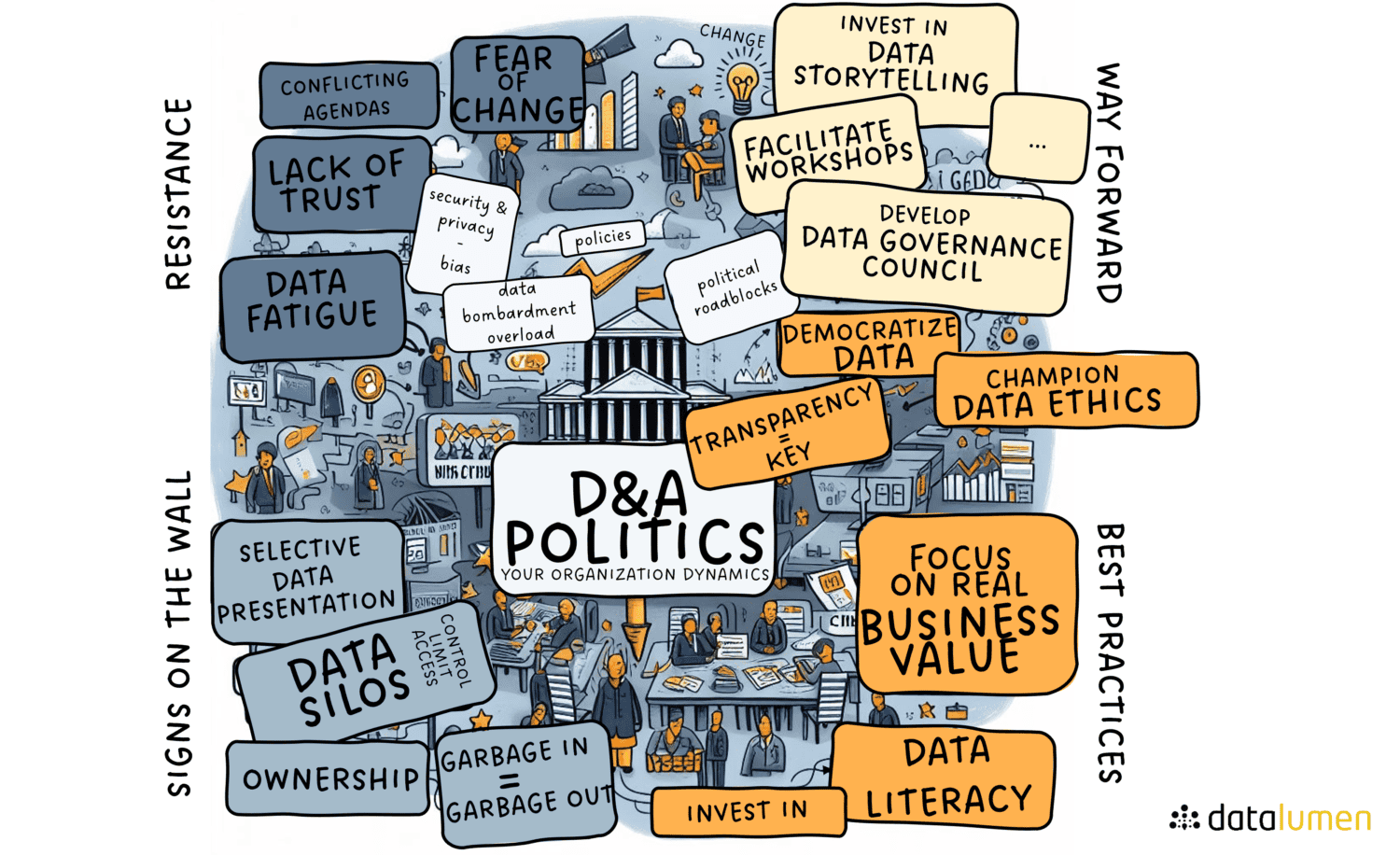

Overcoming Implementation Challenges

Measuring Success

Conclusion